GPU Kernel Optimization

Write custom CUDA/Triton kernels for 10 KernelBench problems spanning element-wise ops, fused operator chains, and full transformer blocks. Each solution must produce correct outputs and run faster than the PyTorch reference.

| Metric | Baseline | + Leeroopedia |
|---|---|---|
| Geometric mean speedup | 1.80x | 2.11x (+17%) |
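For readers unfamiliar with the metric, here is a minimal sketch of how a geometric mean speedup over several problems could be computed. The function name and the timing values are hypothetical and are not taken from the benchmark harness; the actual evaluation may aggregate differently.

```python
from statistics import geometric_mean


def geometric_mean_speedup(reference_ms, kernel_ms):
    """Geometric mean of per-problem speedups (reference time / kernel time)."""
    if len(reference_ms) != len(kernel_ms) or not reference_ms:
        raise ValueError("need two non-empty timing lists of equal length")
    # A speedup above 1.0 means the custom kernel beat the PyTorch reference.
    speedups = [ref / ker for ref, ker in zip(reference_ms, kernel_ms)]
    return geometric_mean(speedups)


# Hypothetical timings in milliseconds for three problems.
reference = [4.0, 10.0, 2.5]
custom = [2.0, 4.0, 2.5]
print(f"{geometric_mean_speedup(reference, custom):.2f}x")
```

The geometric mean is a common choice here because it treats a 2x speedup on one problem and a 0.5x slowdown on another as cancelling out, which an arithmetic mean would not.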

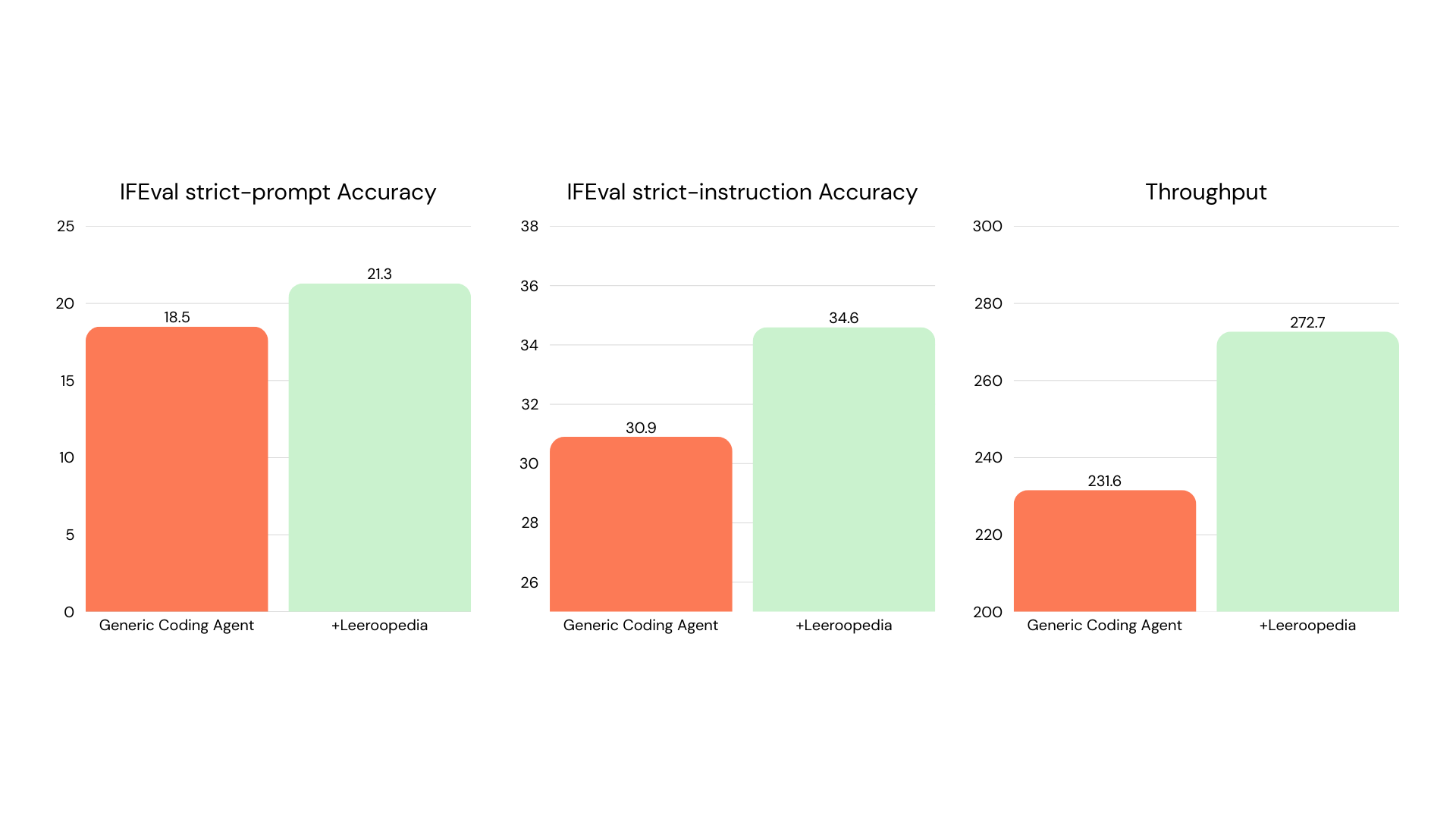

LLM Post-Training

Implement a complete SFT + DPO fine-tuning, LoRA merge, vLLM serving, and IFEval evaluation pipeline for Qwen/Qwen2.5-1.5B on 8×A100 GPUs.

| Metric | Baseline | + Leeroopedia |
|---|---|---|
| IFEval strict-prompt accuracy | 18.5 | 21.3 |
| IFEval strict-instruction accuracy | 30.9 | 34.6 |
| Throughput | 231.6 | 272.7 |

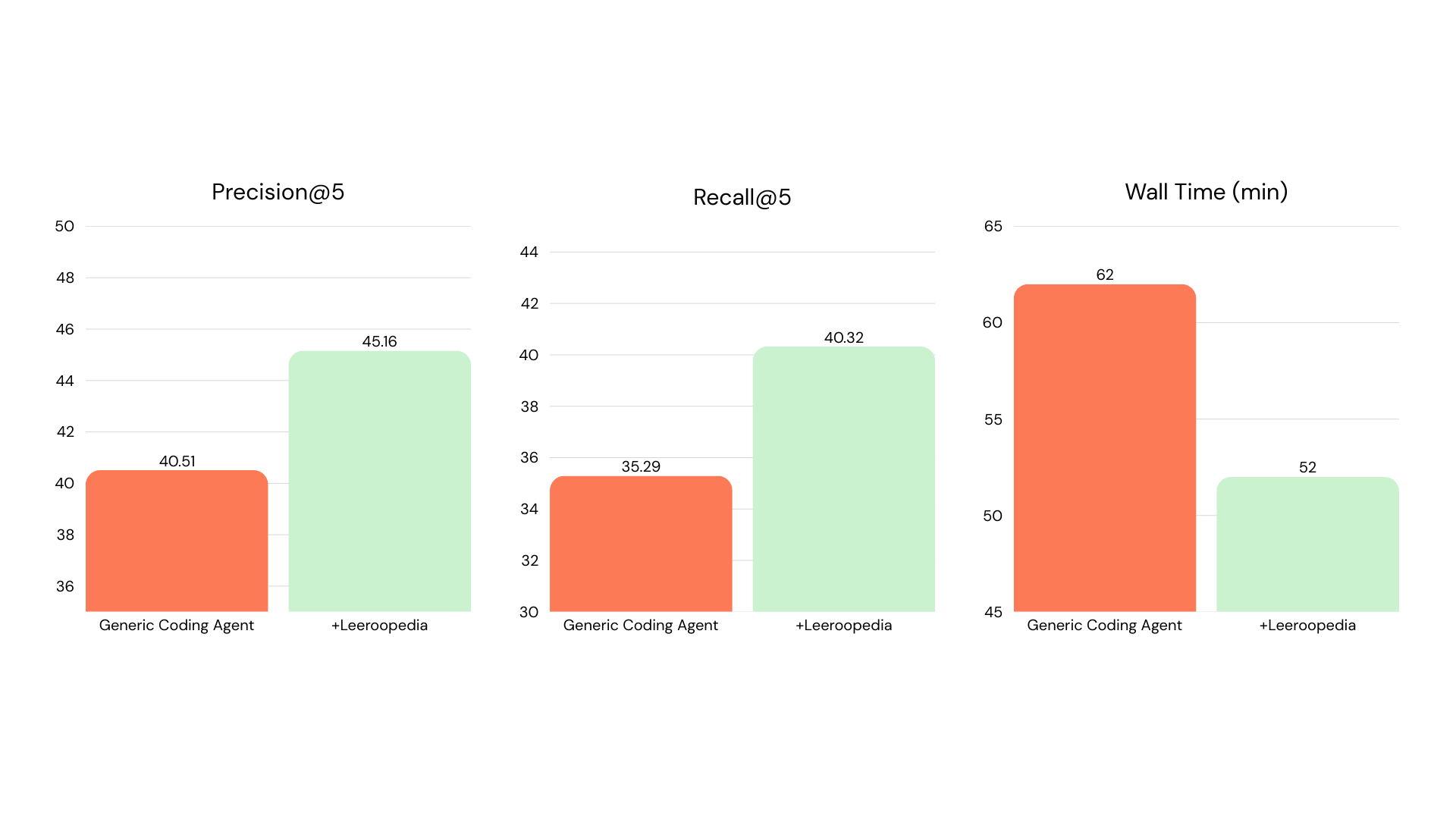

Self-Evolving RAG

Build a FastAPI service that ingests a corpus, answers questions via hybrid retrieval-augmented generation, and automatically improves itself over multiple evolution rounds by diagnosing retrieval failures, re-chunking documents, and adapting query strategies.

| Metric | Baseline | + Leeroopedia |
|---|---|---|
| Precision@5 | 40.51 | 45.16 |
| Recall@5 | 35.29 | 40.32 |
| Wall time | 62 min | 52 min |
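As a reminder of what the two retrieval metrics measure, the sketch below computes Precision@k and Recall@k for a single query using the standard definitions. The function name and document IDs are hypothetical, and the benchmark's own scoring code may differ (for example in how it averages across queries).

```python
def precision_recall_at_k(retrieved, relevant, k=5):
    """Return (precision@k, recall@k) for one query.

    retrieved: ranked list of document IDs, best first.
    relevant:  set of document IDs judged relevant for the query.
    """
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / k
    # Recall is undefined with no relevant documents; report 0.0 in that case.
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical ranking: two of the three relevant documents appear in the top 5.
ranked = ["d1", "d7", "d3", "d9", "d4", "d2"]
gold = {"d1", "d3", "d8"}
p_at_5, r_at_5 = precision_recall_at_k(ranked, gold, k=5)
```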

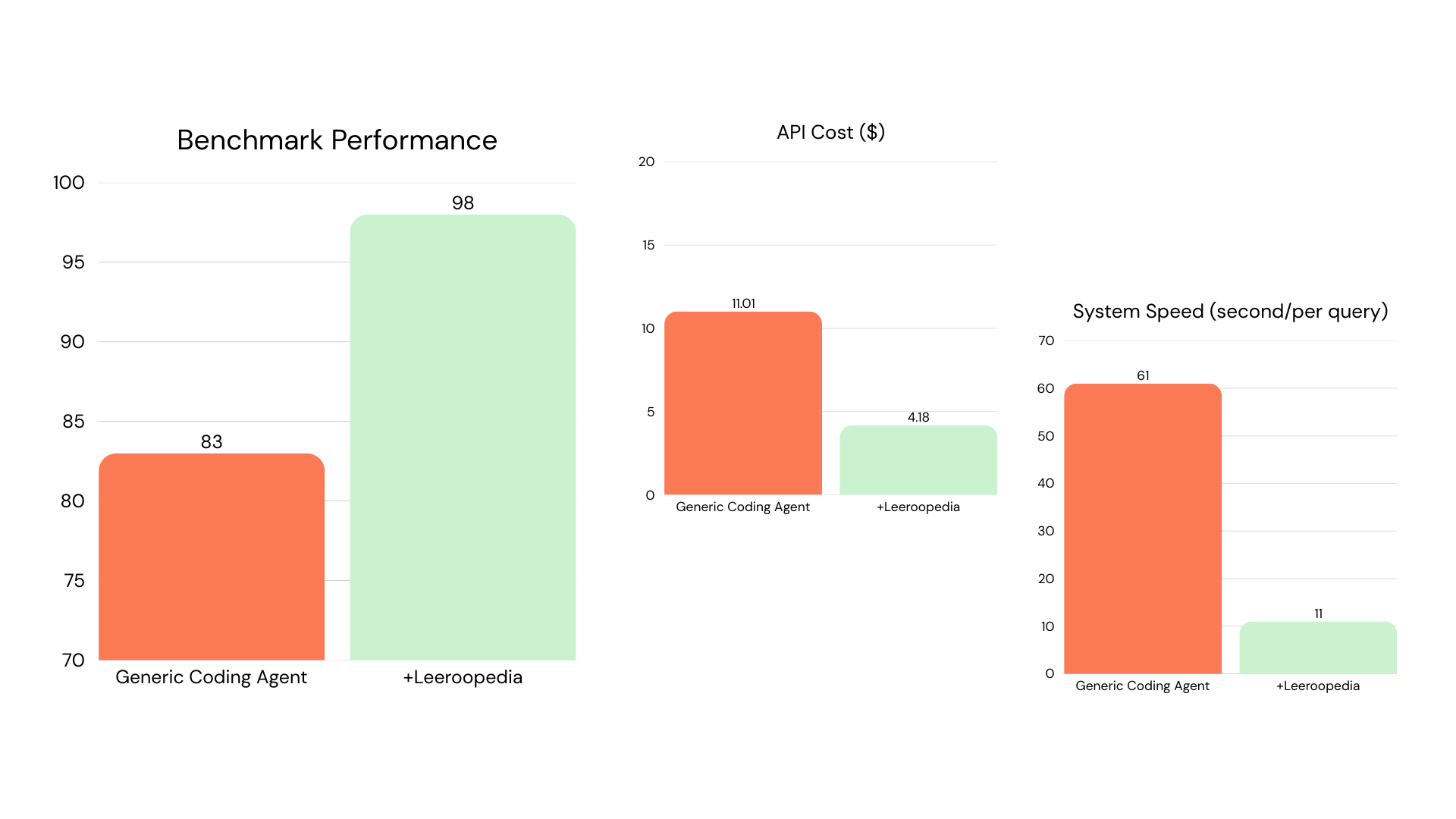

Customer Support Agent

Build a multi-agent system that classifies 200 customer support tickets into 27 fine-grained intent categories using agent handoffs, state persistence, and structured output.

| Metric | Baseline | + Leeroopedia |
|---|---|---|
| Benchmark performance | 83 | 98 |
| System speed (sec/query) | 61 | 11 |

input_type, a framework-specific pattern in the OpenAI Agents SDK. The baseline used fragile regex extraction from free text, while the KB agent registered each handoff with a Pydantic model that the SDK enforces at handoff time. The KB also taught proper state serialization and output_type usage.
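The difference between the two approaches can be illustrated without the SDK. The sketch below is not the OpenAI Agents SDK API: it uses a standard-library dataclass as a stand-in for the Pydantic model, and the intent labels, field names, and function names are all hypothetical. It only shows why a validated payload is less fragile than a regex over free text.

```python
import json
import re
from dataclasses import dataclass

# Hypothetical subset of the intent categories.
ALLOWED_INTENTS = {"cancel_order", "track_order", "get_refund"}


@dataclass(frozen=True)
class HandoffPayload:
    """Stand-in for a schema enforced when one agent hands off to another."""
    intent: str
    confidence: float

    def __post_init__(self):
        if self.intent not in ALLOWED_INTENTS:
            raise ValueError(f"unknown intent: {self.intent!r}")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be in [0, 1]")


def extract_with_regex(text):
    """Fragile approach: depends on the model phrasing its answer one exact way."""
    match = re.search(r"intent:\s*(\w+)", text)
    return match.group(1) if match else None


def parse_structured(raw_json):
    """Structured approach: malformed or out-of-vocabulary payloads fail loudly."""
    data = json.loads(raw_json)
    return HandoffPayload(intent=data["intent"], confidence=float(data["confidence"]))
```

With the regex, a reply such as "The intent is probably cancel_order" yields no match at all, and a reply such as "intent: cancel" is accepted even though it is not a valid label. The structured parser rejects the second case at the boundary instead of passing it downstream.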

→ Full results and replication instructions